Speed has become the modern scapegoat. Whenever systems break, whenever anxiety rises, whenever institutions fail to adapt, the reflex is always the same: slow down. Slow technology. Slow innovation. Slow progress. The idea sounds ethical, even wise, but it hides a deeper refusal to engage with reality as it is. Complexity is already accelerating. Information flows faster than our institutions. Biological stress accumulates faster than our cultural narratives can process it. Slowing down does not resolve this tension. It only postpones collapse.

This is where effective accelerationism (e/acc) begins: not as a provocation, but as a diagnosis. Acceleration is not a choice we make; it is a condition we live in. The question is not whether to accelerate, but whether we do so blindly or intelligently. Effective accelerationism argues that advanced technologies such as AI, sensing, and systems intelligence must be deliberately accelerated to solve problems that incrementalism can no longer touch. Health systems that react instead of predict. Emotional suffering addressed only after damage is done. Governance models built for linear worlds, struggling inside exponential ones.

I have always been skeptical of the moralization of slowness. “Slowing down for humanity’s sake” often means protecting systems that already fail millions of people every day. Inefficient healthcare. Reactive mental health frameworks. Decisions driven by intuition, ideology, or legacy power rather than evidence. Speed, when guided by intelligence, is not anti-human. It is how we restore agency inside complexity.

There is a forgotten convergence between effective accelerationism and technocracy. Before technocracy was diluted into a political label, it described a simple principle: decisions should be grounded in measurement, expertise, and feedback, not charisma or belief. Complex societies cannot be governed by static rules. They require adaptive systems that sense, learn, and respond. Acceleration without technocratic intelligence becomes chaos. Technocracy without acceleration becomes bureaucracy. The future demands both.

Nowhere is this imbalance clearer than in how little we actually measure about ourselves. We track markets, logistics, and engagement with astonishing precision, yet we remain largely blind to our own internal states. Stress, emotional overload, burnout, attention collapse. These are treated as subjective experiences, discussed only after they turn into pathology. AI changes this relationship. For the first time, human data can be analyzed longitudinally, in real time, and at scale. Nervous system activity, emotional patterns, recovery dynamics. If we can measure it, we can model it. If we can model it, we can intervene before breakdown becomes identity.

This is not theoretical for me. It directly informs what I build. Emotitech exists because emotions are not abstractions. They are biological processes, electrical signals, hormonal fluctuations, and behavioral outputs. Accelerating the development of new biosensors and affective AI is how we move from emotional guesswork to emotional intelligence. Not therapy after collapse. Not mindfulness as a coping narrative. But systems capable of understanding the human state early enough to matter.

There is an uncomfortable truth embedded here: measuring humans is a political act. What we choose to sense, how we interpret it, and who controls that data will shape future power structures. Today, technology measures attention to optimize advertising. Tomorrow, it can measure stress to redesign work, healthcare, cities, and education. Biosensors are not accessories. They are instruments of governance for the body and mind.

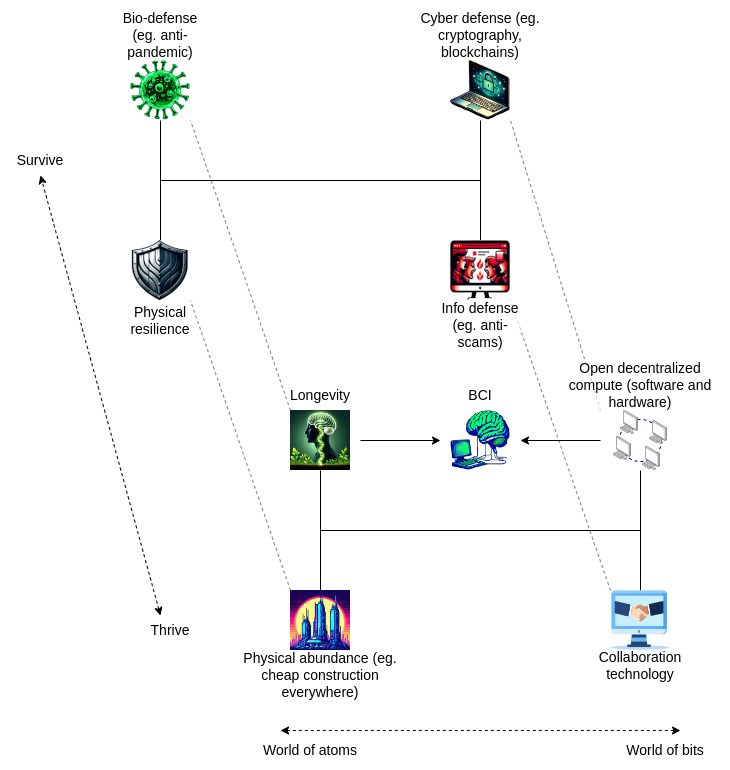

A contemporary expression of this thinking can be found in Vitalik Buterin’s concept of d/acc, where the “d” can stand for decentralized, defensive, democratic, or differential accelerationism. Buterin does not argue for speed as spectacle, but for acceleration that strengthens human agency, resilience, and collective intelligence rather than concentrating power. In this view, the goal of acceleration is not domination but robustness. Technologies should scale in ways that reduce single points of failure, empower individuals, and align incentives toward long-term human outcomes. This framing is crucial because it recasts acceleration as a protective force rather than an extractive one. Applied beyond crypto, d/acc becomes a blueprint for how AI, biosensing, and human data systems should evolve: decentralized, privacy-aware, interpretable, and oriented toward preventing harm before it emerges. Acceleration, here, is not reckless speed but structured intelligence moving faster than the risks it creates.

Effective accelerationism is often portrayed as cold or ruthless. I see it as an ethic of care suited to a complex world. What is more humane: letting broken systems fail slowly while we debate values, or building better tools fast enough to reduce suffering? Speed is not the enemy. Slowing down will not save us. Intelligence, measurement, and intentional acceleration might.